The Signal: Digital Preservation

A Different View of Viewshare

This is a guest post from Camille Salas, a summer intern with the Library of Congress. If you want Camille to help you out with creating a Viewshare, let us know in the comments.

In early July I had the opportunity to work with reference librarian, Erika Spencer, who works in the European Division at the Library of Congress. Erika was interested in creating a Viewshare of Russian digital collections. We met several times to discuss the platform and how it might serve to display her collection. We also worked together to develop several iterations of a view prior to completing the current version that can be found here. What follows is an interview with Erika describing the process of creating a view and what we both learned from our experience.

Erika Spencer, European Division, Library of Congress

Camille: How did you first hear about Viewshare?

Erika: I heard about Viewshare from Bert Lyons, a colleague at the Library who had been working with me in designing a database. Bert mentioned Viewshare as one of several other open source tools. Not long after our conversation, I saw that Trevor Owens was giving a presentation on Viewshare. I attended the presentation and spoke with Trevor about my Russian collection and decided to give it a try.

Camille: Please tell us a little bit about the collection and how it relates to your work at the Library.

Erika: What I created is really just an inventory that lists collections of Russian material that has been digitized and is free and open for public viewing. Most of the collections are self-contained and housed in other libraries and cultural institutions. It could be seen as a glorified finding aid that has as many digitized collections of Russian material as I could find (starting with North American repositories).

Erika: I did this as a service to our researchers of course, but also in the hopes that the field of Slavic librarianship would use it as a type of clearinghouse – informing librarians as to what is being digitized and generally, what is freely available on the web without spending an afternoon searching. In this capacity, it might decrease duplicate initiatives to scan certain material. This is also a way to publicize smaller collections of valuable and often unusual material. The collections I’ve gathered are often small, generally more subject-focused and I see them as getting potentially lost in the fold. I hope to make them easier to find by putting them together like this.

Viewshare of Russian Digital Collections

Camille: How were you were organizing the collection prior to our first meeting and how you were planning on displaying it?

Erika: Initially, I compiled all my data in a spreadsheet. Then I got the idea to try to create my own database, which is where Bert became instrumental in helping me to reorganize my data so it would function well. We built a relational database that provided faceted browsing, search capabilities, and the capacity to update and insert data iteratively or in batches. But because of system security restrictions, we were unable to open the database to the public for access. That’s when I turned to Viewshare because it was something I could do on my own and could serve the basic purpose of getting information on the web.

Camille: During our first meeting, we looked at some Viewshare examples and we also looked at the CSV file that contained information from the database you were using. We discussed the metadata from the collection and the type of information you hoped users would access from it. Based on your description and the CSV file, I observed that the data you wanted to display was only a portion of what was in the file. As a result, I edited the file into a simpler spreadsheet and created an initial view that I showed you in a subsequent meeting. Upon review, it seemed as though you had a better sense of Viewshare’s capabilities and potential. Can you describe your first impressions of Viewshare and how that might have changed throughout the process?

Erika: Viewshare stands out primarily as much more visual than most of the tools I’ve worked with as a librarian. I’m not sure librarians in my field will be used to such a visual way of relaying information, but I do think that we are moving toward that, in the world of technology at least, so I was open to it. I found Viewshare augmented my data in a way that I didn’t really foresee but was definitely positive. It became more intuitive once I had gone over a couple different views with you and the process of rebuilding and getting the hang of how you want the data to look “going in” to achieve the desired end result. You need to play around with it, but that can be a good mental exercise and help to clarify what you think your users will really find helpful.

Camille: After creating the current version of the collection, you mentioned that you are hoping to update it as needed. Do you feel that Viewshare will be an easy way for you to accomplish the updates or do you have any concerns about updating the spreadsheet or your view?

Erika: I have no concerns about the technical process and since my collection is pretty small, it uploads quickly. But to be honest, the fact that I have to recreate the views all over again after uploading will probably cause me to wait until I have a sizable number of updates so I can do them all at once. That is not ideal as I was hoping I could just add data as a new entry to the existing view. But on the plus side, at least I can do it myself, which is sometimes the biggest barrier to getting things done in a large institution.

Camille: Do you have any future ideas and/or plans for views of other collections?

Erika: I will probably see how this one is received before I experiment more because the bottom line for me is how much people use it and that remains to be seen. If it becomes a useful tool for my colleagues, I will definitely turn to Viewshare for future projects-perhaps with other librarians. For me, it’s all about the critical mass. In other words it is only as good as the number of people who know about and utilize it.

Camille: I really enjoyed the opportunity to work with you on this, Erika! As a former IT consultant, it reminded me of how important it is to ensure that the client is happy with the end result. The experience demonstrated how each user has different needs, and Viewshare can be a flexible tool that serves as a simple solution for a seemingly complex problem. Given your experience, do you have any thoughts on what kind of additional features you would like to see in Viewshare that might help other users?

Erika: Likewise, Camille. I thoroughly enjoyed working on this with you and felt enormously fortunate to have such a knowledgeable and helpful guide! As to features down the road, obviously, an easier way to update would be nice. I would like to see a more intuitive way for people to search for your Viewshare collection, even if it is just an interactive list of projects or users. Currently I have to give a specific URL that leads to my collection, which is not as easy as just saying you can find it from the Viewshare homepage. Still, I think at the very least Viewshare is an easy, visually pleasing way to share data online. From a fairly pragmatic standpoint, it had the effect of challenging how I thought about displaying my information (or information in general). Although this was not in the forefront of my mind when I turned to Viewshare, I feel it is something worth considering because ideally, new technologies and web applications will not just give us easier access to information, but also different ways of presenting that information to our users.

WARCreate and Future Stewardship: An interview with Mat Kelly

The five recipients of the inaugural NDSA innovation awards are exemplars of the creativity, diversity, and collaboration essential to supporting the digital community as it works to preserve and make available digital materials. In an effort to learn more and share the work of the individuals, projects and institutions who won these awards I am excited to start the first, of what I hope to be a series, of interviews with the award winners.

Mat Kelly is a Mobile Applications Developer and Programmer at NASA Langley Research Center and a Graduate Student in Computer Science at Old Dominion University. The awards recognized him for his work on WARCreate, a Google Chrome extension that allows users to create a Web ARChive (WARC) file from any browseable webpage.

Trevor: Could you tell us about WARCreate? Specifically, about what the projects goals are, what problems it was designed to ameliorate and how it fits into a larger set of ideas about web archiving?

Mat: WARCreate’s primary purpose is to provide the facilities to allow users to easily preserve content on the web to which they would otherwise have to resort to ad hoc means (e.g., a browser’s “save page as…” function). The focus of the project is to capture content on social media networks but really it is about giving the power to preserve to those whom the preservation of the content would matter most and whom the content is often about (think: Facebook). A secondary goal of the tool is to bring the facilities of institutional archiving (e.g., WARC, wayback) to personal web archiving so some of the advances in the field can be enjoyed by professional and amateur archivists alike. This has not been easy, as some ideas have had to be shoehorned to work with conventional web archiving technologies but with each consideration I hope to make personal web archiving a task that is not daunting to a casual user.

Trevor: Where did the idea for this project come from?

Mat: I initially worked on a similar yet vastly different software project called Archive Facebook, which I presented at the NDIIPP – NDSA digital preservation partners meeting. Exploring the methods and output of this tool made me dig deeper into what has been done to overcome the problem of preserving content that users feel is important and is otherwise difficult or impossible to preserve. While the Bergman’s work made me aware of the vastness of content inaccessible to crawlers, the work of Cathy Marshall brought me up to speed with more contemporary concerns and how casual users go about accomplish personal web archiving. These two authors and many others between the time of their respective publications served as motivation to help overcome the issues that users in Marshall’s work faced as well as capture the content that Bergman implicitly said was difficult to preserve.

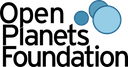

Web content inaccessible to crawlers is many times larger than content that crawlers, including Heritrix, can reach and is frequently not preserved because of this. WARCreate’s method of capturing any page that the user can see allows the archivable content, a superset Heritrix’s archivable content, to be preserved.

Trevor: What have you learned through working on WARCreate? Are there things about either the process and goals of web archiving or about developing software tools to support digital preservation?

Mat: My exposure to the WARC format prior to developing WARCreate was limited. Through some use cases in the Internet Archive driven Archive-It service, I learned that many would like means to archive but that it cannot be so complicated as to require users to dedicate a lot of time in overcoming the learning curve of new software and formats. Through discussing the project in the early stages with both professional preservationists and casual PC users alike, I was made aware of some needs and concerns of users but also the desire for new tools to be interoperable with tools currently used for archiving. This was the premise of utilizing the WARC format – it is an ISO standard and utilized by one of the more popular end-user systems, the Wayback Machine. Giving exposure to tools like the open source Wayback, the Memento framework, the XAMPP client-side server package and the like is a sort of byproduct of developing a tool with integration in mind. I want to make it useful and easy for casual users while taking advantage of the formats and tools with which professional web archivists are already familiar. I am constantly learning what the user wants by developing the evolving project while trying to keep scope creep at bay by modularizing all of the components to ensure that those that want to simply create WARCs without the extra integration will be able to do so by using WARCreate.

Trevor: What attracted you to digital preservation as an area of study? Further, do you have any thoughts for how we can get more computer scientists and computer science students interested in working on problems related to digital preservation?

Mat: Old Dominion University has an excellent web science & digital libraries group and their academic, research and post-graduation success attracted me to the group. In past endeavors, I had worked with some extremely niche projects. With personal digital archiving being a subject that “fell between the cracks” for a long time with different tools and processes for individuals and organization, creating a tool like WARCreate was an opportunity to merge the preservation software environments. One problem in computer science is that there is a tendency to think that Google has solved all open problems involving the web, which isn’t true, especially in respect to preservation. With increased research funding, such as the NSF/NIH Big Data program, more attention is being drawn to the problem and stress that preservation is a necessary pre-condition to data mining and use.

Trevor: Did you find any of the sessions at the Digital Preservation 2012 conference particularly interesting or valuable for thinking about your work? If so, please elaborate on what about them intrigued you or connects with how you are thinking about your work?

Mat: Quite a few of the sessions were valuable and interesting but a select set stuck out as being helpful to my research into personal web archiving. Anil Dash’s talk about content in relation to open formats and Michael Carroll’s description of fair use are going to be excellent starting points when I consider the advantages and ramifications that WARCreate has for a user and the user from which they may be trying to preserve their content. I loved the poster and demo sessions, as they started the wheels spinning on how some of ideas in these concrete implementations are helpful to preservationists and how I should consider these concerns when developing archiving tools in the future.

A Day Camp for Digital Preservation

On July 26, 2012, the Library of Congress hosted CURATEcamp Processing: Processing Data/Processing Collections.

The idea to hold a CurateCamp had been percolating for some time, but the event really came about through a fortuitously timed conversation with our colleague Meg Phillips at the National Archives and Records Administration, and the interest of our colleague Mark Matienzo, a digital archivist at Yale University Library. Throw in a lot of enthusiasm from my Library of Congress colleagues Trevor Owens and Jefferson Bailey, and CurateCamp Processing 2012 was on!

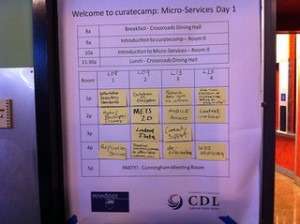

Suggesting topics and setting the schedule at CurateCamp Processing. Photo by Leslie Johnston

This was an “unconference,” a meeting where a theme is announced beforehand but the sessions and schedule are set collaboratively by the participants at the meeting. The Library hosted a small unconference before – one of the series of CRIG RepoCamps in 2008 — but this the first unconference that the Library has organized.

And by organized, I mean threw open the doors.

Of course, it’s not strictly speaking true that this took no organizing. We identified a theme, set up a section on the CurateCamp wiki, suggested topics, promoted the event, and asked that anyone signing up think about topics and write about them in their registration.

Once we all arrived, anyone with a session idea wrote down a title and a short description on a piece of paper and taped it to a schedule grid on the wall. These were then reviewed, combined where appropriate, rearranged and horse-traded until there was agreement on a full final schedule. More than half the sessions on the schedule have links through to notes from the session (I confess I am behind on getting my notes uploaded). Lunchtime was dedicated to lightning talks.

Many associate unconferences with software development or geeky topics, and might be afraid to attend. But CurateCamp? It was first and foremost a good old-fashioned exploration of issues and ideas, such as:

- What is appraisal for born-digital collections?

- What is the minimum processing needed for born-digital collections to make them available to researchers as quickly as possible?

- What are the most useful/usable formats for those materials for researchers? Do we give them authenticated ISO disk images? Or migrate formats for usability? Do we let them work with the original media?

- If we have one workflow for processing the analog items in a collections and a different one for the born-digital items, how do pull together the description and discovery of the various components?

- What are the core significant properties that we need to document to preserve born-digital collections? How do we extract them from files?

- And how can we identify and extract key “entity” information such as names and events/dates and places?

- And for places, how do we track changes in place names (and boundaries) over time? And use that data to provide more map-based interfaces to collections?

- How can we automate the discovery of Personal Identifying Information in born-digital collections and redact it, if necessary?

- How do we provide counts for these collections? Storage size? Files? Items?

Breakout session at CurateCamp Processing. Photo by Leslie Johnston

And discuss these issues we did.

In every session I was in, there was a lively discussion that involved archivists and technologists. People talked about projects they had attempted, leading to both success and failure. People discussed the relative merits of different tools they had worked with. Technologists heard about the requirements and concerns of archivists about processing and making born-digital collections available. And archivists heard what might or might not be technically feasible. Check out the linked notes from the session on the wiki to read more.

No code was written (that I know of), but there was a lot of exchange of ideas in a group that included archivists, museum curators and registrars, librarians, and technologists. I heard a lot of “Have you tried…?” and “Do you know about…?” and “How about if we try this?” and “I didn’t know about this before…” and “Thanks for sharing your work.” And of course, “It was so great to meet you.”

And there were lightning talks.

- Trevor Owens reporting on Tim Sherratt’s project “The Real Face of White Australia”

- Monique Politowski on the NARA/Ancentry.com records digitization partnership

- Cal Lee on the BitCurator project

- Brett Abrams discussing issues in accessioning databases

- Jane Zhang on the issues of providing archival context for digital content

- Jeanne Kramer-Smyth on rescuing content from de-commissioned systems

- Michael Levy on the Blacklight implementaiton at the U.S. Holocaust Memorial Museum

- Mark Matienzo on integrating tools for analyzing legacy media/filesystsms

- Mitch Brodsky on the New York Philharmonic Archive discovery interface.

And that’s what CurateCamps are about. Not necessarily all about the coding. It’s about participation and conversation. And collaboration. And developing a community.

I have to share the great writeup that Jeanne Kramer-Smyth has on her Spellbound blog. Thanks for your participation that day!

The August 2012 Library of Congress Digital Preservation Newsletter is now available

The August 2012 Library of Congress Digital Preservation Newsletter is now available.

http://www.digitalpreservation.gov/news/newsletter/201208.pdf

In this issue:

- Summary of DigitalPreservation 2012

- Rescuing the Tangible from the Intangible

- From AIP to Zettabyte: Comparing Glossaries

- One Family’s Digital Archiving Project

- Fighting the Battle for Fleeting Attention

- Profile of William Kilbride

- Training Digital Curators

- Upcoming Events (Designing Storage Architectures, NDIIPP at Book Festival and others)

- Meetings Roundup (Open Repositories, Preserving Online Science, Data Intensive Research)

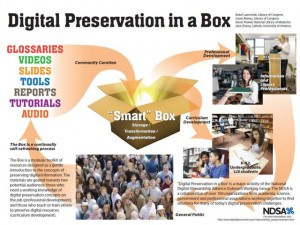

- Resources (Digital Disaster Planning, Digital Preservation in a Box, and others)

Digital Strategy Catches up With the Present: An Interview with Smithsonian’s Michael Edson

For this installment of Insights, the National Digital Stewardship Alliance Innovation Working Group’s ongoing series of interviews, I talk with Michael Edson, the Director of Web and New Media Strategy at the Smithsonian Institution. Edson gave a compelling talk at last year’s NDIIPP/NDSA conference, Let Us Go Boldly into the Present I’m excited to take this chance to talk through and discuss some of the key ideas in his vision for the role of digital media in cultural heritage institutions.

For this installment of Insights, the National Digital Stewardship Alliance Innovation Working Group’s ongoing series of interviews, I talk with Michael Edson, the Director of Web and New Media Strategy at the Smithsonian Institution. Edson gave a compelling talk at last year’s NDIIPP/NDSA conference, Let Us Go Boldly into the Present I’m excited to take this chance to talk through and discuss some of the key ideas in his vision for the role of digital media in cultural heritage institutions.

It looks like you keep revising and expanding your talk, Let Us Go Boldly into the Present, could you give us a quick abstract or brief run-through of what your argument is in this talk?

Michael: You’re right, I have been updating and revising this talk! It’s a set of ideas I feel very strongly about, that I want to share and make better.

The basic idea of the talk is that there is enormous value to be had in the technology platform we have available to us today. We don’t have to wait anymore for some new technology to appear or mature. We don’t have to wait to see if social media and crowdsourcing and mobile data in the cloud are going to add up to anything useful. It’s happened. These things are real, now today. And we’d better get busy. If we want to do justice to our missions—our audacious and important missions in society—we’d better get busy. We need to change our collective mindset from “let’s be cautious and wait to see how things are going to turn out before we commit” to “Let’s place the bet. Let’s get it done.” Hence the title of the talk, “Let us go boldly into the present.”

Let me give you an example. Five or ten years ago we had people like Don Tapscott and Anthony Williams telling us that the future would be owned— “pwned” if I may— by disruptive new ways of working, ways of thinking about work, that took advantage of crowdsourcing (though I don’t think they called it that) or distributed networks collaborating without much central control, like wikipedia. Wikinomics was published in 2006. Those of us who read it, devoured it, and tried to spread the concepts up through our organizations were met with a fair amount of skepticism back then. Even though the arguments in wikinomics are meticulously and generously documented with real world examples, the world in which groups of strangers could work together without central control to advance the mission of a non-profit or increase shareholder value seemed a little…vague, to many people. It was easy to dismiss. Now, eight years later, kapow! Those ideas are tangibly, bankably real because we’ve done the work and shown how it succeeds. This wiki-like way of working is provably real, at scale, in our industry, and now it’s time to place the bet.

These are not fringe activities anymore. Mobile is not a fringe platform. Crowdsourcing is not a fringe activity. Social media is not a fringe activity. Open access is not a fringe activity. Or they shouldn’t be. They don’t deserve to be. These are serious workplace tools. But I think many organizations, used to a slower evolution and maturation of new ideas and platforms, haven’t noticed how quickly we’ve transitioned from theory to prototypes to practice to profit-however you want to define profit. I want organizations to notice what’s changed, what the new physics are, and to align resources and priorities accordingly.

The world needs memory institutions to succeed, to win big, at scale. There’s a lot at stake for our culture, our cultures, our species right now. And we’ve got to either win, now, or get out of the way.

Trevor: How have your ideas about digital strategy for cultural heritage organizations changed and evolved?

Michael: How long do you have?!

Now keep in mind that I’m not a policy maker or a spokesperson here. I’m just a strategy guy with an interesting vantage point.

I find myself focusing more and more on execution and scale recently. Learning how to be a “closer”, personally, and studying the different ways organizations execute successfully on their visions. And also how to orient ourselves towards projects, visions, strategies, that operate at a big scale-that really move the dial on the things we care about, not just for hundreds or thousands of people, but for millions and tens of millions of people. I’m disenchanted with the strategic change model of opportunistic low-risk tactical one-offs and I’m looking for ways we can work tactically towards big, big things.

I was talking with an astrophysicist at the Smithsonian Astrophysical Observatory a few weeks ago and he said they won’t even consider building a new instrument unless it offers a factor of 10x improvement over the last system. That means that with every new telescope they can see ten times farther back to the beginning of time. That’s kind of…bracing. I’d like to see that kind of dedication to scale and impact in the way we approach the other kinds of work we do. Andrew Ng at Stanford just taught his computer science course, online, to 100,000 students. He told the New York Times that he’d have to teach that course in a traditional classroom setting for 250 years to reach that many students. That’s scale.

We have about 20 million physical visits to the Smithsonian every year. Are we going to be able to double that? Triple that? No. Never. Could we triple our reach and impact online? Quadruple it? See a 10x increase? Yes.

Trevor: Can you tell us a bit about some of your early digital projects at Smithsonian? I’m interested in getting a sense of what kinds of change you have seen in your time working on cultural heritage digital media.

Michael: Early on, say, in the late 1990’s, there wasn’t much knowledge and experience with new media in our organizations. Most of us were still trying to figure out how to run Wordperfect macros or get email with Lotus Notes. The idea of half the people in your office having a 32GB iPhone 4s was just, ridiculous. So we spent a lot of strategy-making hours rolling up our sleeves and learning geeky stuff and trying to figure out what it all meant, where it would lead.

I remember doing a visitor orientation kiosk for the Freer Gallery of Art in 1995. Most people coming in the door had never seen a touch-screen kiosk before. Most older visitors had never held a mouse before. Nobody knew what to expect from glowing screens in a museum lobby. I had to teach myself programming, Lingo, photoshop, Macromedia Director, Soundforge, all this insanity. It was just the wild wild west. The strategy was to just wrap your arms around some time and money, somehow, and do something and show it to someone. To pursue the case. To learn something. To connect with somebody.

I also remember teaching myself Perl to build a photo-uploading and sharing website for an exhibition at the Sackler gallery. There was no Flickr then, so I wrote something, and somehow it worked, and it was so gratifying to see people uploading their travel photos of India and telling us about their experiences. And there weren’t a lot of users who knew how to scan and upload photos back then-there weren’t even that many people on the Internet! Everything is so much easier now, which makes strategy so much more important. When you can do anything for almost zero cost and with almost zero technical skill, you need some strategic vision to help you align the tactical opportunities towards some coherent long-term goal.

Trevor: I’m curious to hear a bit about what you think has changed since those early days, aside from advancing technology, do you think staff’s approach to technology is changing as well?

Michael: One thing I’ve noticed recently is how good some of our web and new media practitioners have gotten. How experienced and competent and wise they are now. This is a big change that kind of snuck up on us. It’s certainly snuck up on most managers I think. All of a sudden they have these total professionals under them—maybe it’s happened without them even noticing! I worry that we’re going to lose a lot of talented, self-starting fast learners in our industry if we don’t recognize this emerging, or emerged, talent at the bottom and middle of our organizations. I spend a lot of time working to call attention to these individuals, to get them some recognition and resources and decision authority, and a framework of policy and platforms to help them have an impact.

I was at a web strategy workshop for cultural organizations in Latin America recently, sitting around a conference table with 5 or 10 of my colleagues from throughout the Smithsonian—webmasters, social media coordinators, the people working with the public day-in and day-out on the web—and we were hashing through some of the challenges of managing public relations through social media. And I was gobsmacked, just totally blown away by how much my peers knew about how to do this stuff now. What works and what doesn’t. It was a level of wisdom equal to anything I’ve seen in the industry, and it was the kind of wisdom that can only be borne through experience.

These people had quietly and humbly put in their Malcolm Gladwell 10,000 hours and they knew things. Really smart things. And I see this happening in almost every organization I’ve studied or visited with. When you’ve got that kind of talent emerging within your organization, explosive creative growth can happen, and smart leaders get out of the way. In that kind of environment he job of strategy should change from high-falutin philosophy to execution. Execution. Getting stuff done for the mission, for the public we serve.

So very many things have changed, but the hardest and most important aspects of strategy have remained constant, and remained difficult—I don’t think I’ll ever master them! How to lead. When to push and when to be patient. How to surface and confront difficult ideas in a constructive and non-threatening way, but still to surface and confront them—to press the case. How to help change happen within large, complex organizations. How to build a sense of urgency and a shared vision around mission and progress. How to close the gap between those protecting the status quo and those who want to disrupt.

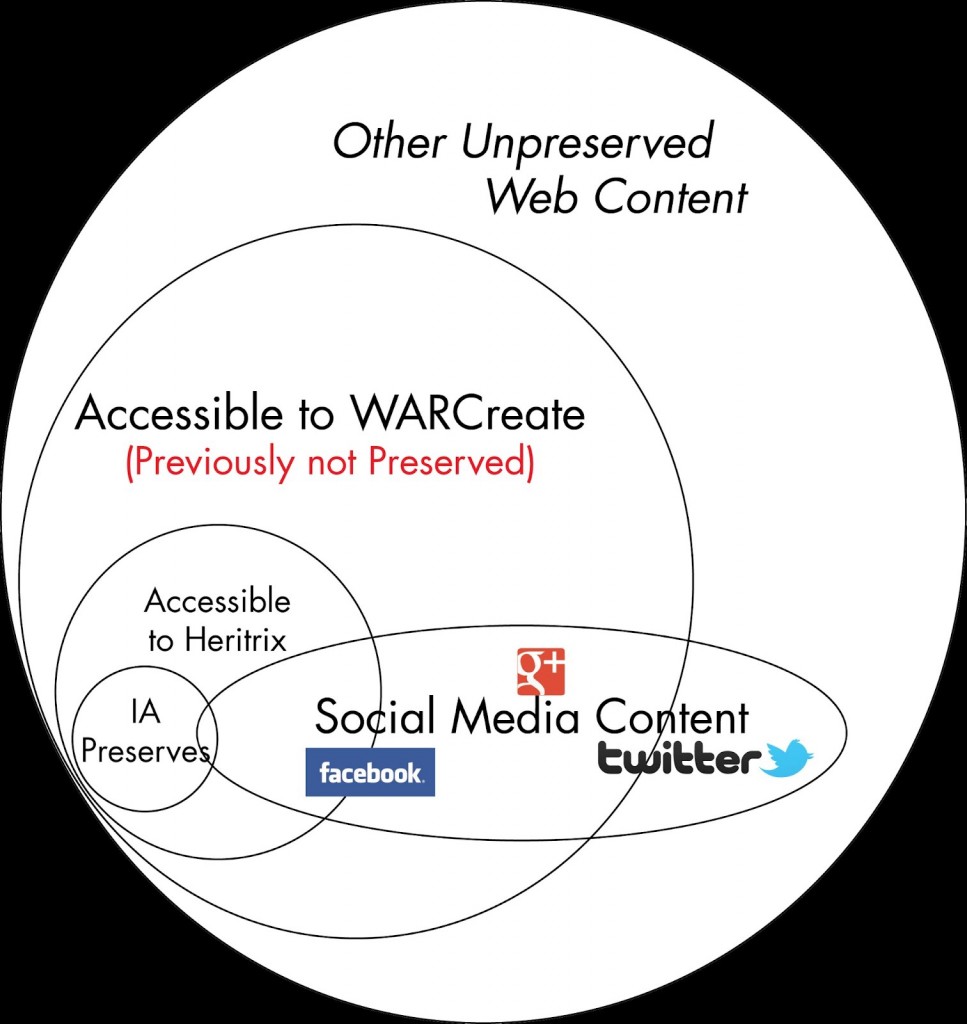

Trevor: In your talk you described an Alien auditor who comes down and looks over how cultural heritage organizations are organized and how resources are allocated. Could you tell us a bit about what you think the Alien auditor would tell us about many of our cultural heritage organizations approaches to new media?

Michael: It all comes down to whether or not we’re going to really embrace new media, and the new kinds of behaviors and group actions that come with it, as a fundamental, foundational aspect of pursuing our missions.

This Extraterrestrial Space Auditor is a thought experiment I came up with to help organizations look more closely at what they were doing—and not doing—with digital media and why. In the early adoption phases of new media initiatives when the inputs and outputs are less clear-things are more experimental. There’s a certain feeling you get in an organization when they’re leaning into important, mission-critical work—there are things you notice when it’s succeeding that don’t show up as line items in the annual report at first. Scott Berkun talks about this in The Myths of Innovation quite a bit.

The basic idea of the Extraterrestrial Space Auditor is to put yourself in the mindset of an auditor from outer space—from way out of town, so to speak—with no bias or assumptions about your organization’s prestige or presumed value in society. The extraterrestrial Space Auditor’s only job is to look at your organization and compare its stated mission with what you are actually doing every day: how you’re spending your time, investing resources, hiring, firing, the kinds of meetings you’re having, the pace and velocity in the organization—the outcomes you’re achieving in society. Are you using all the tools at your disposal, and are you using them well?

Now, of course, technology, new media, and the new kinds of behaviour and group work they make possible are the subtext of this thought experiment. How can we use new media to advance our missions—to accomplish more of the good things we’re supposed to be doing in society, better and faster and with more impact? I think most, but not all, of our cultural/heritage organizations-organizations and businesses of all kinds really—don’t measure up as well as they could or should.

Many organizations feel that new media, social media, mobile platforms, even basic services delivered through straightforward web 1.0 websites, are nice additions to the 20th century modus operandi, but they’re not considered to be critical. They’re not considered to be as good, as valid, as a museum visit, or a trip to the library. I’m constantly surprised by the number of organizations who have not thoroughly build the web—the Internet—into their DNA. Their sense of profit and loss.

Many web teams in museums, libraries, and archives, are starved for resources, starved for attention. The teams, the individuals in the teams, are on the hunt, on the move, they’ve figured out how to pursue the mission in dramatic and innovative ways, they’re doing interesting things within their own limited spheres of influence, but it often feels very tentative from an institutional perspective. We say that the web, technology, the Internet, are important, but too often, an impartial observer would logically conclude that we can and should be doing more. We say in our Smithsonian web and new media strategy that “some re-balancing of resources and priorities will be required.”

Back in 2009 some volunteers did person-on-the-street video interviews with Smithsonian museum visitors, and they asked the visitors what they wanted from Smithsonian websites, and almost all the visitors said they wanted—expected—all of the Smithsonian’s 137 million objects to be online, for free, in high resolution, in 3-d, with a video about the object by a curator. That was their basic expectation. It’s going to take a while to fulfill that expectation, we’re working every day to get the most important materials online first. There’s no time like the present to get started.

Trevor: One of your themes is the idea of ramps and loading docks as key metaphors for defining the digital strategy of a cultural heritage organization. Could you unpack what you mean by these terms and explain your reasoning behind their value?

Michael: I don’t know that it needs to be a part of the strategy, per se, but the “on ramps and loading docks” pattern is a way for organizations to behave, tactically, if they believe that the organization needs to be a connector, a convener, and a catalyst, rather than an exclusive manufacturer—a monopoly.

If you believe you’re a monopoly you want to do everything yourself—you’re internally focused. You build structures and organizational habits around moving infrastructure, expertise, raw materials (collections, data) inside the organizational walls and you do the stuff you want to do, you build the end-products in toto, and you deliver them as final products in a one-way transaction to an audience. You build infrastructure and business rules and a culture of assumptions around that work model. This is like a highway with no on ramps, just a place where the pavement starts and finishes, and if you happen to live in the countryside the road goes by you’re out of luck—you can’t get on it. It’s like a factory with only one small door in it for letting workers in and letting products out, and that door is usually protected by a surly guard.

But if you believe that the best return on investment for society is to behave like a catalyst so that other people outside your organization can be successful, so that other people who don’t work directly for you can take your resources (or in the case of public institutions, can take the resources already paid for by taxpayers) and execute on your mission themselves by making a new product, inventing something, making new creative works, making a scientific breakthrough…then you need to build infrastructure, business rules, and a culture of assumptions around making it easy to get resources, ideas, expertise, data, knowledge, and attention in and out of your workgroups. This is like a highway with a lot access ramps, or a factory with a lot of entrances, comfortable well lit staff rooms, and a lot of big beefy loading docks to accept and move out all kinds of things.

And if you have a highway with a lot of on and off ramps, or a factory with a busy loading dock, you need to devote a lot of time to ensuring that those things work efficiently—you need to work at managing the infrastructure, the work habits and cultural assumptions of lots of people, things, ideas, data, resources moving through your organization to where they’re needed. Otherwise you have chaos. Or inevitable atrophy and failure.

So, all of this being said, ask yourself how easy is it to share raw materials within your organization? Or to share from inside to outside? How easy is it to share a 100MB data file with a colleague? How easy is it to bring a volunteer software developer on board? Can you get them a login ID and an email address? Is there a place for them to sit? Can you find a room to meet with 10 colleagues? 100?

All of these things constitute a kind of platform that makes it easier to get work done in the fast, collaborative, open environment I think we need to be working in. Not just a platform of servers and apps, but a comprehensive platform to make collaborative work of all kinds easier.

Trevor: You also focus on defining different roles, processes, responsibilities for groups working at the edge of an organization and groups working at the core. Could you talk us through why you think this is so important? Further, do you have any good examples for places that you think are doing this well, that have groups doing innovative work at the edge that is being scaled up, refined and integrated into the core?

Michael: In our our Web and New Media Strategy we say that the best innovation happens out at the edges of the Institution, where we have, close together, subject matter experts, collections and data, the public (which can include a 6th grader or a Nobel laureate, or both) and some degree of technology expertise. This is an intentional effort to embrace an edge innovation model here, and this kind of innovation doesn’t usually happen in central offices, it mostly happens out in the vast border habitat between “us” and “the public.”

But, we also say that the innovators out on the edges have reached the limits of what they can accomplish on their own, without a commons of shared tools, standards, and infrastructure. So the “innovation at the edges: a commons in the middle” strategy is a way of acknowledging, and turning into a feature, the need to balance autonomy and control within the organization. And I see this dynamic playing out everywhere I go, because it’s so easy to innovate at the edges now.

Once you’re headed down this path, you get into a situation where you need to build core competencies around identifying which innovations at the edges need to be brought into the central platform so they can scale, so you can get some network effects, and so you can relieve the innovators at the edges from the withering responsibility of maintaining servers and doing security and software maintenance and all those things that edge innovators are notoriously bad at and get bored with very quickly.

A long time ago we decided, somehow, that we weren’t going to ask our curators, our subject matter experts, our catalogers, to bring sacks of coal into work in the winter to heat their offices. I talked about this recently at a symposium for the National Heritage Board, National Library, and National Archives in Sweden…

We don’t ask researchers to blow their own light bulbs, or press their own copy paper from rags and wood pulp. There’s a big platform that central service organizations have agreed to perform to free up time and energy to use for mission related work. That same process needs to happen, habitually and intentionally, with the IT stack.

Edge-to-core is what happened, kind of haphazardly, when the World Wide Web came along. When computers came into the workplace. It’s happening now with drupal, with mobile, with intellectual property policy, the public domain, and the creative commons. We’re getting better at it, and that’s a good thing. There’s going to be a ceaseless torrent of edge innovation being injected into our organizations for years to come. That process might— will — change the way we think about traditional organizations and what they can and can’t do. It’s going to be a restless and exciting time.

Trevor: Where do you think the home should be for digital media in a cultural heritage organization? Or, how do you think one should divide up roles and responsibilities when digital is increasingly becoming a key part of nearly every part of cultural heritage organizations? We are increasingly acquiring, preserving and exhibiting born-digital and digitized materials, using social media for outreach and public relations, supporting researchers and fielding reference questions through digital channels, and supporting all of that work with a substantive IT infrastructure. Who should be whom’s ramp and loading doc?

Michael: Hah! I see the same pattern being acted out almost everywhere. I’ve written a little about this in “Good projects gone bad, an introduction to process maturity” from a session I did with the Getty’s Nik Honeysett at the American Association of Museums conference in 2008, and also in New Media, Technology, and Museums: Who’s in Charge? from AAM in 2009.

I think that everyone is moving along the same series of evolutionary plateaus, from a chaotic approach to organizing for new media to a “mature” one. This is modeled loosely on the “capability maturity model” way of thinking about organizational change. (I talk about this in depth in the “Good projects gone bad” powerpoint and the accompanying text document on slideshare.)

In the first level, the most basic level, the approach to new media governance and ownership is chaotic and opportunistic. Authority and responsibility is granted, often passively, to an arbitrary individual or workgroup within the organization. Good things can happen, but they rely on individual heroics, there’s little measurement, and successes are hard to repeat.

Level two is the “Emerging and Repeatable” level. You still have very small teams working pretty low down in the org chart, but there’s been an intentional management decision to place authority somewhere. You start to see some standards and business rules built around a few projects, but a lot is unmeasured and left undone.

In level three, you start to see a Director or CEO-level awareness of the new media as an explicit line-of-business, but authority is still in a somewhat arbitrary position in the org chart, and usually two or three steps from the executive suite. There’s usually general uncertainty about the true purpose and impact of new media in the organization, and there are a lot of struggles over decision authority and direction but you also start to see some organizational discipline around workflows, standards, costs, and outcomes.

Level four is “Quantitatively Managed.” You start to see the of new media departments, greater awareness of roles and responsibilities and the routine and predictable involvement of key stakeholders. At this level, new media has become an integral part of organization. You see formal organization and oversight, usually in the Director’s office to someone without specific background in new media but who has overarching knowledge of the organization and a lot of decision authority. Perhaps most notably you see increasing cross-disciplinary expertise/experience across the enterprise: the new media team is familiar and broadly competent with all areas of expertise across the organization, and visa-versa. Here’s where you start to have everyone sharing the same on ramps and loading docks!

In Level five, you have full-time, formal, professional management and visibility in the executive suite, everyone is focused on new media-not as something special, but as an integral part of the overall mission-based effort. You also see a controlled, measurable, repeatable cycle of experimentation and innovation. At level five, the organization is focused, competent, and can innovate freely because they know what they’re capable of and know how new media fits into the overall framework of outcomes.

The trick in working with this framework is figuring out where you are and trying to ratchet forward one level at a time without slipping back. It takes years of effort to move along this path, but I see SFMOMA, the Met, the Library of Congress, the National Archives, the Walker, PBS, NPR, Discovery, National Geographic, and many other kinds of organizations moving along this path. I don’t know of any examples of executives or boards choosing to de-emphasize and un-prioritize the new media line of business, though I’m sure it’s happened somewhere. And I’m fine with that, as long as it’s done in an honest, urgent effort to advance the mission.

Trevor: What do you see as today’s biggest challenges regarding digital media and cultural heritage organizations? Further, what and how do you think we go about meeting those challenges?

Michael: Leo Mullen quipped at the closing plenary of a museum strategy conference that the biggest obstacle to (and I’ll paraphrase) “Organization 2.0” was Organization 1.0. It was a clever thing to say and it got a big and knowing laugh from the audience, but the kernel of truth in it represents the tremendous challenges facing our large “forever” institutions — our memory institutions. We’ve got to last forever, but we’ve also got to be nimble, agile, and fast to have an impact. Sometimes those things—those different value systems, can seem hard to reconcile.

How to meet that challenge? Develop and hone a strong sense of mission, a strong vision of the impact you want your organization to have in society, and then ruthlessly measure progress towards that vision every single day. If you need more “new media,” more digitization, more crowdsourcing to get that impact, then remove the obstacles and get going. If you need less new media, get rid of it. The things that matters are impact and outcomes.

When organizations are struggling with this I invoke “the one year rule.” I tell them this: think about a gathering, a staff or executive meeting a year from now- what do you need to have accomplished? What do you need to have nailed, crushed, succeeded at or you will have to resign in shame? Name those things now. Measure progress towards them every day. And get them done. Most organizations find that with focus and effort, the most important things take months to get done, not years, and the team finds the tangible progress and accomplishment exhilarating. Liberating. Sometimes life changing. I always evoke the motto of social entrepreneurship: think big, start small, move fast.

Trevor: What parts of our standard practices at cultural heritage organizations do you think we should be radically rethinking? Are there any key parts of our organizations that you think just persist unchanged which we should be seriously re-evaluating?

Michael: “Radical” is a pretty loaded term. What we’re doing isn’t radical. It’s pretty practical, given what’s changing in society, what will change in the future, and what’s at stake.

In this epoch we should be rethinking, re-evaluating, everything, always. That’s not radical, that’s pragmatic. That’s liberating and realistic. But it’s not a license to navel gaze. Let’s get something done. Something that will matter, for a citizen, tomorrow, and something enduring that will matter 100 years from now.

I think the most important re-thinking we’re doing now is around our traditional intellectual property policies, and the ways in which we can encourage and celebrate the use, and re-use, of “our” resources by citizens—by everyone—for the benefit of society. If we get it right, if we begin to form a new appreciation for how our work relates to the giant mashup that is knowledge creation and cultural participation in the digital age, then our descendants will remember us with smiles on their faces. Our institutions will endure.

How Do You Staff Your Digital Preservation Initiatives?

The following is a guest post by Jimi Jones, Digital Audiovisual Formats Specialist with the Office of Strategic Initiatives.

Digital preservation is an emergent field. Businesses, cultural memory institutions and government bodies that want to responsibly preserve and generate digital assets face significant challenges with respect to staffing.

Banquet celebrating the newly hired motormanettes, conductorettes, coachettes and driverettes of LARy by user metrolibraryarchive on flickr

How many staff do we need? What types of positions are needed? What skills, education and experience should we be looking for? Should we hire new staff or retrain existing staff? What functions should be scoped as part of the program? What should be provided by other parts of the organization, outsourced or provided through collaboration with other organizations?

The National Digital Stewardship Alliance Standards & Practices Working Group is conducting a survey of organizations currently responsible for digital preservation to gain insight into how organizations worldwide are addressing these staffing, scoping and organizational questions. This survey is open to public, private and government memory institutions.

Here are some examples of questions from the survey:

- Do you participate in any digital preservation consortial or cooperative efforts?

- Which of these activities are considered part of the scope of the digital preservation function at your organization, whether or not you have implemented this activity yet? (Respondents can choose from a list including: digitization, metadata creation, fixity checks, secure storage management and much more.)

- Which department(s) take the lead for digital preservation within your organization? If this is a fairly equally-distributed effort choose more than one. (Respondents can choose from a list including: information technology, preservation department, archives and so on.)

- How many FTE do you currently have doing digital preservation in your organization, and how many would be ideal? (FTE stands for “full time employees.”)

- For in-house staff, did you hire experienced digital preservation specialists and/or retrain existing staff?

We have received about 60 responses to the survey so far – including some of our NDSA partners. It is our hope that once we collate and interpret results we can provide valuable information for institutions that are embarking upon digitization and digital preservation programs and/or projects. Results from surveys like these may even become fodder for best practices with respect to digital preservation staffing.

We are excited to announce that we will be presenting the interpreted results of the survey as a poster at the 2012 iPres meeting at the University of Toronto, Canada, October 1-5, 2012. From the iPres website: “the iPRES series embraces a variety of topics in digital preservation – from strategy to implementation, and from international and regional initiatives to small organisations. Within this broad topic area, each conference defines a slightly different focus.” We will make the survey findings widely available after the conference. Keep your eyes on the Signal blog for more!

You still have time to take the survey! If you are interested please click here. Only one response should be submitted per institution.

We will make our best effort to protect your individual survey responses so that no one will be able to connect your responses with you or your organization. Any personal information that could identify you or your organization will be removed or changed before results are made public. We will combine your responses with the responses of others and make the aggregated results public, and preserve the anonymous data long-term for research purposes.

If you would like to plan your answers before filling out the online survey, you can access the survey worksheet here as a Microsoft Word document. After you take the survey, if you would like a copy of your institution’s responses, please send the request to ndsa [at] loc.gov with the subject line “Staffing Survey” and indicate in the email your institution and the IP address from which you submitted the survey.

We greatly appreciate the responses we’ve received from this survey. The Standards & Practices Working Group believes that making the results available can help to demystify the processes involved in putting together a digital preservation program or project for the relative newcomer to the field.

Act now! The survey will close on August 17, 2012.

Note: This post was edited on 8/9/12 to include the name and title of the author.

Record Crowd Participates in DigitalPreservation 2012

Apps that want to be good. Messiness and meaning. Mature-and immature-organizations.

Anil Dash, credit: Abby Brack, Library of Congress

The Library of Congress provided a forum for innovative insights during its annual digital preservation meeting, held during July 24-25. DigitalPreservation 2012 drew record attendance from across the country and around the world.

The Library’s National Digital Information Infrastructure and Preservation Program organized the meeting to meet several goals. One was to hear from prominent technologists and thought leaders. Another was to bring together Library partners and others to share learning and best practices. Yet another was to extend collaborative ties to improve digital stewardship in the service of advancing knowledge and creativity.

A few highlights indicate some success in meeting each of these goals.

The gathering opened with Anil Dash speaking on the value of the open web for digital preservation. Dash, a well-known blogger and tech entrepreneur, described himself as “a geek interested in the social impacts of technology on culture and government.” He has a strong interest in public policy and stated that archivists and librarians are grappling with issues that technology community knows little about. Dash warned that proprietary applications lock up content and put it at serious risk of loss or misappropriation. The way around this involve linking apps to the web, which permits copying and preservation. While this is now the exception, there is some hope for optimism. “There is a growing class of apps that want to do the right thing,” Dash said. He called upon the digital preservation community to engage more effectively with technologists to raise awareness and push for change.

David Weinberger @ Veneziacamp2009 – 3, by ialla, on Flickr

David Weinberger, best-selling author and senior researcher at the Harvard Berkman Center for the Internet & Society, talked next about the dramatic change that the web has brought to ideas about information and knowledge. Until recently, he noted, knowledge was constrained and localized in the service of “managing, filtering, reducing and winnowing information to reach definitive answers.” With the web, knowledge has been set free to grow and evolve in networks. This compels us to accept the messiness of information on the web, and move away away from defined answers. “Messiness is how you scale meaning… disagreement is how you scale knowledge.” And, despite the vast size of the web, it can never represent “everything,” which lessens concerns about digital preservation-although Weinberger urged those efforts to continue. Weinberger also live-blogged the presentations of other speakers.

Michael Carroll, American University Law Professor and Creative Commons board director, compared digital preservation to environmentalism, in that both entail stewardship of valuable resources as well as long-term planning. Both also call for institutional incentives. Carroll noted that while concerns about intellectual property law can serve as a disincentive for digital stewardship, he stated that libraries and archives should feel free to capture as much content as they can right now. Allowing access to that content may take time to permit crowdsourced-metadata as well as resolution of copyright issues, he said, but saving the material is a critical first step. Carroll said that such capture could be justified under legal concepts of both fair use and free speech. He urged the preservation community “to organize itself as the voice of tomorrow’s users on issues of copyright policy and copyright estate planning.”

Poster Presentation, credit: Abby Brack, Library of Congress

Bram van der Werf, Executive Director of the Open Planets Foundation, presented on Assuring Future Access, from Infancy to Maturity. He called for more effort into “preventative maintenance for digital collections and the software needed to preserve them.” The extent to which an institution is capable of this can be see as a function of what van der Werf referred to as digital preservation maturity. Immature organizations, for example, generate orphan data and “abandonware” software tools. He stated that the key element was developing capable, well-trained staff to populate a “mature community of merit with many motivated people empowered and rewarded by their organizations.”

The day wrapped up with a poster session designed to shared information and spur discussion about broadening collaborative efforts. Many of the presenters outlined work in relation to the National Digital Stewardship Alliance, a recent NDIIPP initiative to extend the Library’s digital preservation partnership network. Presentations included Digital Preservation in a Box: Outreach Resources for Digital Stewardship; Digital Preservation Policy Development at the Library of Congress; Developing Case Studies for At Risk Content; and Teaching Digital Preservation in a Digital Curriculum Laboratory.

CurateCamp Processing, by wlef70, on Flickr

The following day featured plenary sessions on big data, preserving digital art and culture, and perspectives on digital preservation projects from federal grant funders. A series of breakout sessions followed to demonstrate new digital preservation tools from the Library and partners such as the National Archives and Records Administration, University of Virginia, Harvard University and the State Library of North Carolina. There were also small group discussions on Preserving Electronic Records in the States, Defining Levels of Preservation, and Assessing and Mitigating Bit-Level Preservation Risks.

In association with the meeting, the Library sponsored a CurateCamp on July 26 to focus on two different notions of “processing”: archival processing and data processing. Following an unconference model, participants organized into a series of small group discussions to consider issues such as processing digital acquisitions; defining and extracting essential characteristics for digital objects; and options for repository software. The talks generated lively discussions that were documented on the event wiki.

Notes and presentations from DigitalPreservation 2012 will be made available on the NDIIPP website as they become available.

Digital Preservation 2012: The Power of Community

In a world of video and web conferencing, text messaging, email, immersive communications and (almost forgot!) telephones, we seem to have eliminated the practical need to ever meet with people face-to-face.

Tim O’Reilly at the 2011 NDIIPP/NDSA Meeting. Photo credit: Abby Lewis

So why even have a meeting like we’re holding this week, the Digital Preservation 2012 conference? (July 24-26 at the Sheraton Pentagon City in Arlington, Va in case it slipped your mind…).

While Web 3.0 technologies will undoubtedly make our lives much easier, they’ll never replace the power of real community achieved when people get together in person to discuss issues, share ideas and work together on solving shared problems.

Plus, meetings are fun, entertaining and educational!

This is certainly the case for the Digital Preservation 2012 meeting. The meeting kicks off on Tuesday July 24 with a series of public presentations from some of the most insightful commentators on digital culture.

Anil Dash by user mirka23 on Flickr

It starts with Anil Dash, the founding director of Expert Labs, an organization that helps agencies in federal, state and local government listen to the ideas and insights of citizens. Dash is “an entrepreneur, writer and geek living in New York City, obsessed with the ways that technology shapes and transforms culture, media, government and society.” He’s also a very dynamic speaker exploring a variety of technological innovations that promise to shape the way people communicate.

We then turn to David Weinberger, a senior researcher at the Berkman Center for Internet and Society at Harvard University. “Senior researcher” really fails to capture the range of his activities and interests. He’s the author of a number of influential books, including The Cluetrain Manifesto, Small Pieces Loosely Joined and Everything Is Miscellaneous: The Power of the New Digital Disorder, as well as a frequent commentator on National Public Radio’s All Things Considered and a frequent author in business and technology journals. If that weren’t enough, he’s also the Co-Director of the Harvard Library Innovation Lab and was a Franklin Fellow at the United States Department of State.

But wait, there’s more!

Michael Carroll CC Summit 2011 Warsaw by user kalexanderson on Flickr

Next up is Michael Carroll, Professor of Law and Director of the Program on Information Justice and Intellectual Property at the American University Washington College of Law. He’s a founding member of Creative Commons, a nonprofit organization that enables the sharing and use of creativity and knowledge through free legal tools. His research focuses on the search for balance in intellectual property law over time in the face of challenges posed by new technologies.

Finally, joining us from Europe is Bram van der Werf, the Executive Director of the Open Planets Foundation. OPF was founded in March 2010 to provide practical tools, solutions and expertise in digital preservation, building on the investments made by the European Union and the Planets consortium, which brought together sixteen major European research and national libraries, national archives, leading technology companies and research universities to improve decision-making about long-term preservation, ensure long-term access to valued digital content and control the costs of preservation..

And that’s just day one!

Day two, Wednesday July 25, includes main room panel discussions on “Big Data Stewardship,” “Preserving Digital Culture” and “Funding the Digital Preservation Agenda,” along with breakout sessions on a huge variety of digital preservation, curation and stewardship issues, including demos of some of the most innovative current preservation tools.

17 August 2010 by user carmendarlene on Flickr

If possible, day three, Thursday July 26, is even more interesting because you get to help set the agenda. NDIIPP is co-facilitating CurateCamp: Processing with folks from the National Archives and Yale University. CurateCamps are a series of unconference-style events focused on connecting practitioners and technologists interested in digital curation. CurateCamp:Processing will explore both the “computational” and “archival” senses of processing, and bring together archivists and curators with software developers and engineers to do some creative thinking and tinkering.

Three days, man! Three days! The Digital Preservation meeting only happens once a year, so we cram in as much activity as we can.

Follow the action at #digpres12 on Twitter, but attend in person if you can. There’s nothing like the power of face-to-face community.

Repositories: Not Just About Publications Any More

Not that repositories ever really were only about published scholarly output, but for some organizations that was the easiest first bar to reach. But at Open Repositories 2012, it was clear that the bar has been raised.

Presenting on big data and digital collection repository development at LC, photo by eurovision_nicola at http://www.flickr.com/photos/eurovision_nicola/7546008032/

OR2012, held at the University of Edinburgh from July 9-13, 2012, had over 480 registered attendees from over 40 countries. The participants included developers, librarians, library administrators and service providers. The topics were, as usual, quite wide-ranging, including calls for increased open access to scholarly publications, introductions to core repository technologies, presentations on new types of repository services, the need for name identifiers/disambiguation and the ever-popular developer challenge. The entire conference was live-blogged, which provided some remarkable coverage. And there is a tweet archive for the tag #or2012. Oh, and there is a Flickr pool.

But if there was one word that that was woven into almost every presentation, it was this: DATA.

Which made me very happy. Because anyone who reads my posts here or has seen my speak anywhere in the past 9 months knows that I go on and on about two things: Library, Archive, and Museum collections are now being mined as data by researchers, which requires new management strategies and new self-serve services. And, consequently, cultural institutions all have big data and need different IT infrastructures for the processing and serving of these collections. And I said as much in my presentation at OR2012 (Check out the live blog post from the session I spoke in).

Just about everyone was discussing RDM, or Research Data Management. It has become clear that institutional repositories must not only manage scholarly publications, but the data that was created through observation and experimentation or collected and published, in order to support the “re-” activities: review, reuse, replicability and reproducibility. RDM platforms are needed to help researches capture and share and publish their datasets. The public-facing discovery infrastructure is but a small part of this effort: the greater need and effort is in capturing data from the original instruments and formats and the transfer and documentation of datasets in a reliable, documented way to support a forensic level of authenticity for future researchers. The Digital Curation Centre has a great blog post reviewing some of the sessions on this topic.

Piped into the OR 2012 Reception, photo by eurovision_nicola at http://www.flickr.com/photos/eurovision_nicola/7553134454/

Another word which was everywhere was “identifiers.” Disambiguation of researchers/authors has been a known issue since the earliest Institutional Repositories, where one publication might be by “Leslie Johnston,” and another by “L. Johnston,” and another by “Leslie L. Johnston,” depending upon the publication’s stylebook. Is that the same person to someone searching for all my publications? ORCID is the more mature service in the assignment and resolution of identifiers, but the status of ISNI, aka ISO 27729, was also presented. There will likely never be a single unique identifier, as there are these two international services, national services, and institutional services. The catch will be crosswalking between all the identifiers. The same can be said for article or item identifiers, such as the DOI, which has a high level of buy-in in the publishing realm, but uneven adoption for other types of objects.

There was also a lot of discussion about scale. Not a lot of solutions yet, but a lot of discussion. I heard some very promising presentations about optimization for Solr and the use of noSQL databases.

Linked Open Data was, unsurprisingly, a topic of discussion. The opening plenary by Cameron Neylon from the Public Library of Science very much emphasized this point: “It’s about links; it’s about connectedness.” And it’s not just linking between objects and repositories, but synchronization between them. Some very interesting early work was presented on the Webtracks and ResourceSync projects.

A number of NDIIPP partners presented their projects at Open Repositories, including Duracloud and Chronopolis.

I can never say enough about the Developer’s Challenge at OR, where developers pitch ideas, refine the ideas, and often develop code in but a few hours. DevSCI, which sponsored the challenge, covered the event and the winners.

I would encourage anyone interested in any of these topics to read through the comprehensive session live-blogging, and to check out videos on the OR2012 YouTube channel. My own session is apparently missing, due to unrecoverable file corruption (really).

Digital Disaster Planning: Get the Picture Before Losing the Picture

The following is a guest post by Chelcie Rowell, 2012 Junior Fellow.

Frequency of occurring? Rare. Impact of occurring? Huge. I’m talking about digital disasters.

Zine Symposium – Russell Square London 2006, by szczel, on Flickr

Stewards of digital content, like stewards of analog content, must plan for catastrophe in advance in order to minimize loss and recover quickly. True, digital disasters may occur infrequently. But at the scale that institutions collect digital content and for the length of time that institutions wish to preserve digital content the risk of a disaster is non-trival.

Disasters may be natural (such as tornadoes and earthquakes) or failures of infrastructure (such as power failures). Disasters may result from intentional human action (such as cyberterrorism) or simply human error (such as accidental deletion).

A digital disaster negatively impacts an institution’s digital content. What distinguishes many catastrophes that threaten digital content from those that threaten analog content is that digital disasters may be much less visible. Bit rot is a one-in-a-million occurrence, for example, but when it happens special tools are needed to seek it out and prevent a digital disaster.

At a recent digital disaster planning workshop, Jessica Branco Colati walked participants through the process of preparing for and recovering from the inevitable. The importance of this is highlighted by two recent headlines that provide concrete examples of the stakes involved with disaster planning.

When the website of avant-garde literary website 3:AM Magazine suddenly disappeared, what staff initially believed to be a glitch quickly turned into deeper concern when the service provider responsible for managing the site’s servers was unable to be reached. Editor Andrew Gallix was quoted as saying “I never expected those who were meant to host and back up our content to just switch us off without even telling us.” The extent of the digital disaster was difficult to assess due to crucial failures of communication. Unable to contact their service provider, staff felt powerless to take any action to recover their content. Referring to the missing service provider, Gallix said, “At this stage, we do not know if we’ll every be able to speak to him and if he can switch his server back on long enough to allow us to move 12 years’ worth of content to another, more reliable host.”

Lego Woody, by jamiejohndavies, on Flickr

Pixar faced a digital disaster of comparably catastrophic impact involving the film Toy Story 2. As described by a Pixar technical editor, an accidental deletion wiped the working files before the film was finished. What audiences experience as an animated film is actually a complex digital object that contains thousands upon thousands of smaller files. Combined, these files are rendered into frames—including animation, set, and lighting data—that sequentially make up the moving image.

As the accidental deletion unfolded, pieces of that complex digital object were removed from disk, seemingly before the makers’ very eyes. As Oren Jacob, the film’s assistant technical director, put it “Woody’s hat disappeared. And then his boots disappeared. And then as we kept checking, he disappeared entirely. Woody’s gone.”

Fortunately, the studio was able to quickly restore the film from back ups. But after the back ups were revealed to be corrupt, the only recourse was to inventory different versions of the back up and perform human-intensive quality review to stitch together enough valid data to render a relatively complete film. Jacob recalled, “In the end, human eyes scanned, read, understood, looked for weirdness, and made a decision on something like 30,00 files that weekend.”

Both these episodes raise the issue of risk tolerance. When an institution manages unique digital materials, it needs to seriously consider what steps have to be taken to prevent-or at least minimize-loss?

One Family’s Personal Digital Archives Project

In 1958, Vernon James was an adventurous young man from Colorado who landed a job teaching in Germany for the Department of Defense. During his 16-year stint there, he travelled extensively throughout Europe — including several visits behind the Iron Curtain into West Berlin — and he took lots and lots of photos.

Vernon and Stan James

Decades came and went and in 2005 Mr. James — who was retired by then — decided to scan his European slides along with the other slides and photos he had accumulated over the years. “I was ignorant of scanning when I started this project,” said James. “I had heard about scanners and bought a scanner with a slide attachment and I started scanning all of my slides.”

The scanner did just what Mr. James wanted it to do: it scanned. When he finished the slides he started on photos: from his wife’s year teaching in Ethiopia, from his wedding and more…a lifetime of personal photos. “After that we started scanning everything I had in the house,” said Mr. James. “I scanned everything from my birth certificate to things from my early childhood and little clippings in the local newspaper,” Mr. James said.

Vernon James (on tricycle) with his brothers and his parents. (1931)

“I had a brother, Bob, who died in a Japanese prison camp in 1942 and my mother had saved the letters and memorabilia from him and I scanned all of those.

“I scanned letters my wife had written when she was overseas. And it kept on mushrooming. I kept finding more and more letters and documents. My wife has kept a diary starting from back in 1965 and I think I have 35 years of diaries scanned.” Vernon had built up momentum and was being productive. What could go wrong?

Mr. James’s son, Stan, a game designer and Internet startup founder, was visiting his parents during a summer break from grad school, when Mr. James offhandedly told Stan about his project. “I was excited that he had done this on his own,” said Stan. “And being the tech guy, I looked his project over.”

Stan saw right away that the resolution was set way too low and the photos were in a virtual heap. In fact, one of Mr. James’s challenges in his project had always been organization. He said, “I didn’t have a system. It was just a hodgepodge.” Stan said, “I helped him put the scans into folders by year. And then shortly after that we had to separate by side of the family.”

Vernon James and his mom (1945)

After resetting the scan resolution and organizing the files, Stan bought his father a hard drive on which to store and preserve his stuff. And from then on Stan was involved with the project, out of personal and professional interest.

It’s not that Mr. James did anything horribly wrong. In fact, he’s a smart man who he did the best he could with the little information he had. The problem was more a scarcity of consumer-friendly personal archiving information that clearly addressed what Mr. James and millions of others were trying to do — create a digital archive of their personal stuff. And Stan knew that if he and his dad were to work together, Stan had to keep his suggestions and information simple in order to avoid overwhelming his father.

From the early days of the project, Mr. James had diligently typed comments into his photos. Stan was shocked to find that the software Mr. James was using, the software that came with the scanner, was actually engraving the captions right into the photo image, not adding the captions into the back end of the file, as it should’ve. Hundreds of photos were marred with captions. Stan said, “It was almost as if you were to take a Sharpie pen and write on top of a print.

“I searched for a better program and eventually settled on Picasa, partly because it worked cross platform. I was on a Mac and he was on a PC but we could both still use Picasa. And Picasa uses standard formats for writing things like captions and geotag information.”

Vernon James at the ruins of Hitler’s bunker. (1959)